Operationalizing Predictive Grand Strategy: Building National Security Indexes and Micro-Models for Strategic Synchronization

Abstract

This article is the third paper in a three-part research series that advances the case for restoring American strategic coherence through a measurable, predictive grand strategy system. The first paper established the strategic requirement for a formal Grand Strategy Directive (GSD) that defines enduring U.S. national interests and synchronizes the instruments of national power across government. The second paper provided the methodological foundation for that directive by integrating DIMEFIL, PMESII-PT, and ASCOPE into a unified analytic spine capable of aligning interagency planning, indicators, and decision rhythms.

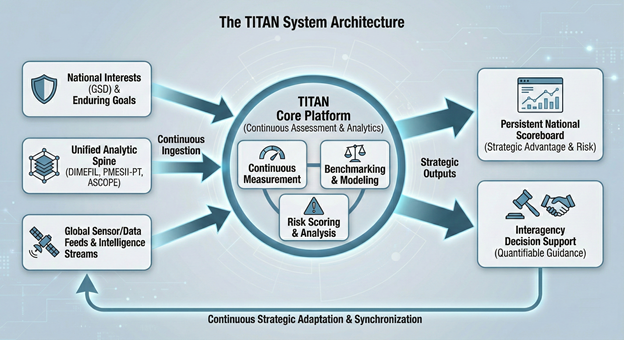

This third paper operationalizes that framework by proposing TITAN (Total Integrated Threat Assessment Network), a national-level decision support tool designed to measure, monitor, and score U.S. national interests continuously. TITAN enables national interests to be translated into quantifiable benchmarks, assigned to responsible lead and supporting agencies, and evaluated against competitor performance through strategic indexes and domain-specific micro-models. By producing a persistent national scoreboard of advantage, risk accumulation, and progress toward objective outcomes, TITAN closes the critical gap between strategic intent and measurable execution.

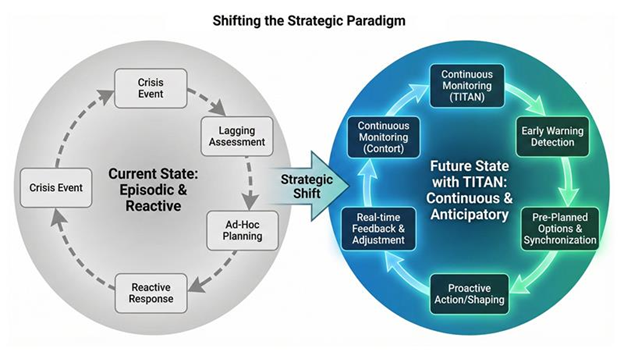

The system enables continuous strategic assessment, early warning, and interagency synchronization, transforming U.S. strategy from episodic narrative planning into a data-driven architecture for anticipatory governance and national decision superiority.

Illustrative applications include strategic indexes addressing rare earth supply security, semiconductor dependency, artificial intelligence competitiveness, quantum resilience, space domain stability, Taiwan Strait deterrence stability, and national resilience. Together, these mechanisms form an anticipatory governance architecture capable of adapting as competitive conditions evolve. The article concludes that without such a system, the United States remains structurally reactive in an era defined by sustained great power competition and accelerating technological change.

The TITAN System Architecture: Continuous Assessment, Benchmarking, and Strategic Outputs

Note. This figure introduces TITAN (Total Integrated Threat Assessment Network) as the national-level decision-support platform that operationalizes predictive grand strategy. It shows how national interests, unified analytic frameworks, and global sensing streams feed continuous measurement, benchmarking and modeling, and risk scoring and analysis to produce strategic outputs such as a persistent national scoreboard and interagency decision support.

1. Introduction

The United States is entering a decisive era of strategic competition in which national power is being contested every day, across every domain, and often below the threshold of open war. The defining characteristic of this environment is not simply danger. It is speed. Economic coercion, cyber disruption, influence operations, technology competition, supply chain vulnerability, space-domain interference, and military intimidation now unfold continuously, shaping outcomes long before traditional warning systems register a “crisis.” While the United States still possesses unmatched capability, it increasingly lacks something more important than strength. It lacks a unified mechanism to measure strategic performance, detect cumulative risk, and synchronize national power fast enough to compete.

China demonstrates why this matters. The People’s Republic of China is not competing episodically. It is executing an integrated, long-horizon campaign designed to shift the balance of power through coordinated diplomatic pressure, industrial dominance, financial leverage, technology acquisition, information control, and military modernization. China’s approach fuses state power into a coherent system. It applies economic influence through trade dependencies and strategic minerals control. It targets technological advantage through artificial intelligence, quantum research, semiconductor positioning, and data access. It contests narratives globally through influence operations and media shaping. It pressures allies and partners through gray zone tactics and persistent intimidation in contested regions. The cumulative effect is strategic momentum. Each individual action may appear manageable, but together they compound into structural advantage.

By contrast, the U.S. national security enterprise remains largely organized for periodic planning rather than continuous competition. National strategies, defense reviews, intelligence estimates, and budget cycles are updated on timelines measured in months or years. Departments and agencies often operate on separate rhythms, pursue objectives through disconnected metrics, and assess threats through stove-piped lenses. This structure produces a predictable weakness. The United States can respond forcefully to crises, but it struggles to measure whether it is winning the competition before crises occur.

This paper is the third in a research series arguing that American strategic coherence must be rebuilt as a measurable national capability. The first paper established the requirement for a Grand Strategy Directive (GSD) that articulates enduring national interests and aligns the instruments of national power across government. The second paper provided the methodological foundation for that directive by integrating DIMEFIL, PMESII-PT, and ASCOPE into a unified analytic architecture. This third paper addresses the central unresolved question: how can grand strategy be operationalized as a continuous national function rather than an episodic document?

The answer proposed here is TITAN (Total Integrated Threat Assessment Network), a national-level decision support tool designed to measure the status of U.S. national interests in real time, assign ownership of each interest to responsible agencies, and evaluate progress against strategic competitors such as China. TITAN enables national interests to be translated into quantifiable benchmarks, tracked through strategic indexes and domain-specific micro-models, and displayed as a persistent national scoreboard of advantage, risk accumulation, and trajectory. By connecting strategic intent to measurable execution, TITAN provides the missing operational layer that makes grand strategy enforceable, assessable, and adaptable.

In an era where China competes through integrated national power and continuous pressure, the United States requires more than policy statements and episodic reviews. It requires a system that measures national performance, reveals strategic drift early, and synchronizes interagency action toward objective outcomes. TITAN is designed to provide that system.

Shifting the Strategic Paradigm: From Episodic and Reactive to Continuous and Anticipatory Competition

Note. This figure contrasts the current U.S. model of crisis-driven response, lagging assessment, and ad hoc planning with a TITAN-enabled future state characterized by continuous monitoring, early warning detection, real-time feedback and adjustment, and preplanned options and synchronization. It supports the paper’s claim that strategic advantage increasingly belongs to systems that detect risk accumulation early and coordinate action faster than competitors.

2. Literature Context and the Analytical Gap

The need for a measurable, predictive grand strategy architecture is urgent because the United States is now competing in an environment where strategic advantage shifts daily, often without a single shot fired. China is not waiting for wars to win outcomes. It is building decisive leverage through AI dominance tied to compute, semiconductors, and energy; through power grid and infrastructure vulnerability that can be exploited below the threshold of conflict; through rare earth minerals and supply chain choke points that function as coercive terrain; and through continuous pressure on Taiwan using layered military intimidation, cyber disruption, economic coercion, and narrative warfare designed to fracture decision-making before a crisis is formally declared. In this environment, episodic strategy documents, disconnected agency metrics, and slow interagency coordination are not merely inefficient. They are strategically dangerous. If the United States cannot continuously measure national interests, assign ownership across government, and score real-time progress against China’s competitive momentum, it will remain reactive by design, recognizing strategic defeat only after it has already accumulated.

Parallel bodies of research examine systems thinking, complex adaptive systems, and networked governance in national security contexts. These studies highlight nonlinear competition dynamics and the limitations of linear planning models. However, they often remain abstract or are confined to specific domains such as operational planning or intelligence fusion, limiting their applicability at the national strategic level (as described in papers 1 and 2).

A third strand of literature examines analytic frameworks such as DIME, PMESII, and ASCOPE (as shown in paper 2). These frameworks are widely used across the United States government to structure environmental analysis and operational planning. In practice, they are frequently applied in isolation or as checklists supporting discrete planning efforts.

A practical example illustrates the gap and why a predictive architecture must be institutionalized rather than improvised. Consider a Taiwan Strait coercion campaign executed by China without crossing the threshold of invasion. In this scenario, China does not begin with amphibious assault indicators. Instead, it initiates a coordinated pressure sequence designed to fracture political will, degrade economic confidence, and constrain U.S. and allied decision space while maintaining plausible deniability.

The campaign begins with synchronized actions across multiple domains. China increases maritime and air activity near Taiwan to normalize elevated military pressure while remaining below the level that triggers traditional crisis response mechanisms. Simultaneously, Chinese state-linked cyber actors intensify probing of Taiwan’s critical infrastructure, focusing on energy distribution, port logistics, and telecommunications reliability. In parallel, Chinese information operations amplify narratives portraying U.S. security guarantees as unreliable, framing Taiwan’s economy as unstable, and pushing themes intended to weaken public confidence and investor sentiment. At the same time, China applies economic leverage through selective trade pressure, regulatory harassment of targeted firms, and informal coercion against multinational corporations operating in Taiwan or supporting Taiwan-linked supply chains. None of these actions alone constitutes “war,” but together they create compounding strategic effects.

Under current U.S. processes, these developments are often assessed in parallel but not fused into a single measurable strategic picture. The Department of Defense may focus on force posture and deterrence indicators. The Intelligence Community may assess intent and warning. Treasury and Commerce may track market and trade vulnerabilities. State may manage alliance signaling and diplomatic posture. DHS and CISA may observe cyber risk patterns. Each organization can perform well within its lane while the national system still fails to answer the most important question: Are the United States and its coalition gaining or losing strategic advantage, and how close is the situation to an irreversible shift in conditions?

Continuous Measurement as the Missing Link Between Cross-Domain Signals and National Strategic Judgment

Note. This figure reinforces the analytical gap identified in the paper by illustrating why parallel assessments across agencies are insufficient without a unified measurement and synchronization mechanism. It supports the argument that TITAN converts multi-domain signals into a single integrated national picture by tying indicators to national interests, ownership, and threshold-based scoring.

TITAN resolves this by converting the scenario into an integrated measurement problem tied to national interests, ownership, and thresholds. First, the Grand Strategy Directive defines enduring interests relevant to this scenario, such as preserving Taiwan Strait deterrence stability, maintaining semiconductor supply chain resilience, sustaining alliance cohesion, and protecting U.S. economic and information security from coercive disruption. TITAN then assigns lead and supporting responsibility for each interest to specific agencies. For example, deterrence stability may be led by the Department of Defense with support from the Intelligence Community and State. Semiconductor resilience may be led by Commerce with support from Treasury, DOE, and DoD. Alliance cohesion may be led by State with support from DoD and the Intelligence Community. Cyber and infrastructure resilience may be led by DHS and CISA with support from FBI and Cyber Command.

Second, TITAN translates each interest into quantifiable benchmarks and continuously updated indicators. Deterrence stability is measured through indicators such as changes in PLA readiness patterns, missile posture signals, maritime density in key corridors, allied operational tempo, and escalation messaging patterns. Semiconductor resilience is measured through indicators such as shipping and insurance disruptions, supply chain choke-point stress, Taiwan production continuity risk, adversary export control behavior, and allied inventory posture. Information environment integrity is measured through indicators such as coordinated narrative amplification, bot-network surges, sentiment degradation among key audiences, and media ecosystem penetration. Financial stability and coercion exposure are measured through capital flow anomalies, insurance pricing spikes, currency volatility, and adversary-linked economic pressure signals.

TITAN Scoreboard Logic: Trendlines, Thresholds, and Cross-Domain Convergence

Note. This figure supports the Taiwan Strait coercion scenario by illustrating how converging military, cyber, economic, and information indicators can be aggregated into strategic indexes that shift from green to yellow to red. It reinforces the paper’s claim that TITAN enables earlier warning and faster interagency coordination by displaying strategic posture as trendlines and threshold triggers rather than episodic snapshots.

Third, TITAN aggregates these indicators into a national scoreboard that shows strategic posture as trendlines, not episodic snapshots. The Taiwan Deterrence Stability Index may shift from green to yellow based on sustained convergence across military pressure, cyber probing, and narrative escalation. A Supply Chain Coercion Index may deteriorate as shipping risk rises and investor confidence drops. An Alliance Cohesion Index may weaken as partner states signal hesitation, domestic political friction rises, or competing narratives gain traction. The scoreboard does not replace judgment. It forces clarity by showing when multiple domains are moving in the same adverse direction, how fast the deterioration is occurring, and which national interests are being compromised.

Finally, TITAN produces decision-relevant outputs by linking measurement to interagency options. When thresholds are crossed, the system triggers a structured review, identifies which agencies own the relevant levers, and presents integrated response packages rather than disconnected recommendations. Diplomatic signaling, posture adjustments, export control enforcement, sanctions preparation, cyber defense hardening, allied coordination measures, and information counter-messaging are sequenced as a unified campaign. This converts grand strategy from narrative aspiration into a measurable system of anticipatory governance. It also reduces response latency by revealing risk accumulation early, before a crisis becomes irreversible.

This example demonstrates the central contribution of the architecture proposed in this article. The analytical gap is not that the United States lacks expertise or capability in any single domain. The gap is that it lacks a persistent, cross-domain measurement and synchronization system that translates national interests into benchmarks, assigns accountability, and continuously scores progress against strategic competitors such as China. TITAN is designed to close that gap.

3. Analytical Propositions for Predictive Grand Strategy

To support evaluation and policy testing, this article advances five propositions.

Proposition 1: Predictive grand strategy architectures that integrate cross-domain indicators reduce strategic response latency compared to episodic assessment models.

Proposition 2: National strategic indexes improve interagency synchronization by providing shared measures of strategic conditions across the instruments of national power.

Proposition 3: Continuous strategic assessment improves early detection of gray zone escalation dynamics that are unlikely to trigger traditional warning mechanisms.

Proposition 4: Department of War oriented strategic framing improves clarity in national interest prioritization during sustained great power competition.

Proposition 5: Domain-specific micro-models increase precision and early warning sensitivity without sacrificing strategic coherence when nested within a unified national assessment architecture. Here, a micro-model is a modular, agency-owned analytic component that translates a single national interest or competitive problem into measurable indicators, thresholds, and trend-based outputs that can be nested into national-level strategic indexes.

Micro-models are intentionally narrow, high-resolution building blocks that make grand strategy executable. They convert abstract strategic priorities into measurable realities by tracking discrete competitive problems such as rare earth processing dependency, semiconductor chokepoints, AI compute advantage, power grid resilience, space-domain stability, or Taiwan Strait deterrence degradation. Their value is not theoretical. It is operational. Without micro-models, national strategy remains a set of intentions measured through periodic narrative reviews. With micro-models, national interests become auditable benchmarks, updated continuously, and tied to decision thresholds.

Equally important, micro-models are designed to be LEGO-like and modular. They can be built quickly, improved iteratively, stress-tested against real events, and replaced as conditions evolve, without breaking the national architecture. This enables agency-specific ownership and accountability. Commerce can maintain micro-models for industrial capacity and supply chain exposure. Treasury can maintain micro-models for coercive finance, sanctions performance, and adversary capital flows. DoD can maintain micro-models for deterrence stability, readiness, and force posture indicators. DHS and CISA can maintain micro-models for cyber and infrastructure risk. The Intelligence Community can maintain micro-models for adversary intent signals, mobilization indicators, and escalation trajectories. When these agency-level micro-models feed upward into shared national strategic indexes under common standards for indicators, weighting, thresholds, and reporting rhythms, the United States gains something it currently lacks: a persistent national scoreboard that is both detailed enough to be actionable and unified enough to drive synchronized whole-of-government decisions.
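The modular, replaceable quality described above can be sketched in a few lines. This is a minimal illustration rather than a TITAN specification: the class names `MicroModel` and `MicroModelRegistry`, the observation key `single_source_share_pct`, and both scoring rules are hypothetical and exist only to show that a model can be revised without changing the code that consumes it.

```python
from dataclasses import dataclass
from typing import Callable, Dict

Observations = Dict[str, float]


@dataclass(frozen=True)
class MicroModel:
    name: str                               # the competitive problem being tracked
    owner: str                              # lead agency accountable for the model
    score: Callable[[Observations], float]  # raw observations -> 0-100 score


class MicroModelRegistry:
    """Holds agency-owned micro-models keyed by name."""

    def __init__(self) -> None:
        self._models: Dict[str, MicroModel] = {}

    def register(self, model: MicroModel) -> None:
        # Registering under an existing name swaps the model in place, so
        # callers of evaluate() are unaffected when an agency revises it.
        self._models[model.name] = model

    def evaluate(self, obs: Observations) -> Dict[str, float]:
        return {name: m.score(obs) for name, m in self._models.items()}


# Hypothetical scoring rules, for illustration only.
def supply_exposure_v1(obs: Observations) -> float:
    return 100.0 - obs["single_source_share_pct"]


def supply_exposure_v2(obs: Observations) -> float:
    # A stricter revision: exposure is penalized twice as heavily.
    return max(0.0, 100.0 - 2.0 * obs["single_source_share_pct"])


registry = MicroModelRegistry()
obs = {"single_source_share_pct": 30.0}

registry.register(MicroModel("supply_chain_exposure", "Commerce", supply_exposure_v1))
first = registry.evaluate(obs)

registry.register(MicroModel("supply_chain_exposure", "Commerce", supply_exposure_v2))
second = registry.evaluate(obs)
```

The same call to `evaluate` returns a different score once the revised model is registered, which is the sense in which a micro-model can be replaced without breaking the layer above it.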

These propositions are not deterministic predictions. They establish a testable foundation for assessing whether a continuous predictive architecture improves strategic awareness, reduces response latency, and strengthens interagency coordination when compared to episodic, stove-piped planning processes.

4. Integrated Framework Architecture

Operationalizing predictive grand strategy requires transforming analytic frameworks from descriptive tools into components of a unified national assessment system. This article integrates three established frameworks, each serving a distinct function.

To ensure clarity for readers outside the national security planning community, the three frameworks used in this architecture are defined in practical terms:

- DIMEFIL describes the tools the United States can use to compete and influence outcomes. It is the menu of national power, ranging from diplomacy and information to military force, economic and financial leverage, intelligence, and law enforcement action.

- PMESII-PT describes the environment the United States is operating in. It is a structured way to assess what is changing in a competitor’s system and the broader world across political, military, economic, social, information, infrastructure, physical environment, and time dynamics.

- ASCOPE describes the real-world actors and observable features inside that environment. It converts abstract analysis into measurable reality by focusing on specific areas, structures, capabilities, organizations, people, and events that can be tracked and assessed over time.

In simple terms: PMESII-PT explains what is happening and why it matters, ASCOPE shows where it is happening and who is involved, and DIMEFIL defines what the United States can do about it.

TITAN Integrated Assessment Overview: National Interests, Framework Fusion, and Scoreboard Outputs

Note. This figure depicts TITAN’s integrated assessment architecture by nesting U.S. national interests and benchmarks into PMESII-PT strategic environment assessment, ASCOPE observable entities and actions, and DIMEFIL instruments of national power. It supports the paper’s argument that predictive grand strategy becomes executable when frameworks are fused into a measurable system that produces scoreboards and alerts for decision advantage.

Rather than applying these frameworks as parallel checklists, the proposed architecture nests them hierarchically.

First, national interests are articulated in functional terms aligned with DIMEFIL domains. Second, each interest is assessed across PMESII-PT variables to capture systemic conditions and trends. Third, ASCOPE elements identify measurable indicators, organizations, and events that operationalize assessment.

This nested integration enables strategic questions to flow downward from national interests to indicators, and upward from data to strategic judgment. The result is an architecture capable of continuous update without requiring constant revision of strategic documents. The framework creates a common analytic language that supports interagency participation while preserving domain expertise.

5. Predictive Architecture Design

The predictive architecture proposed in this article functions as a national sensing and decision support system. It is designed to monitor competitive dynamics continuously, identify deviations from baseline conditions, and support anticipatory decision making.

The architecture consists of three core components:

- National strategic indexes aligned to quantified national interests

- Domain-specific micro-models that produce high-resolution analysis

- Governance mechanisms that synchronize assessment cycles and decision rhythms

Data flows from agency-level analysis into shared indicators, which are aggregated into indexes tied to national interests. Outputs inform senior leadership through trend analysis, threshold alerts, and structured assessments rather than automated prescriptions.

This design avoids deterministic claims of prediction. It emphasizes probabilistic awareness and early warning. Strategic judgment remains a human responsibility, supported by analytic infrastructure rather than replaced by it.

6. National Interests as Quantifiable Benchmarks

Strategic documents frequently rely on narrative objectives such as “maintain access,” “preserve deterrence,” or “ensure advantage.” These phrases provide direction but do not define sufficiency. Competition at machine speed requires measurable thresholds.

Illustrative benchmarks include:

- Maintain secure access to at least 40 percent of global rare earth refining capacity outside adversary control

- Ensure 70 percent of advanced-node semiconductor production remains within United States or allied territory

- Preserve a three-to-one advantage in ISR platforms in key maritime domains relative to peer competitors

- Maintain at least 95 percent integrity of United States space-based positioning, navigation, and timing under stress conditions

- Retain at least 51 percent global share of foundational AI model training capacity measured by compute, talent, and data

- Achieve quantum-resilient encryption for at least 90 percent of government communications by a defined date

- Limit dependency on any single adversarial nation to no more than 20 percent of critical supply chains in targeted categories

These benchmarks are illustrative rather than prescriptive. Their purpose is to demonstrate how national interests can be made auditable and continuously monitored.
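One way to make such benchmarks auditable is to store each as a structured record with a target, a direction, and an owner. The sketch below is an assumption about representation, not a prescribed schema: the `Benchmark` class and its field names are hypothetical, the lead and supporting agencies for the semiconductor benchmark follow the example given in Section 2, and the observed values in the usage lines are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Benchmark:
    interest: str
    target: float
    unit: str
    higher_is_better: bool
    lead_agency: str
    supporting: List[str] = field(default_factory=list)

    def is_met(self, observed: float) -> bool:
        """True when the observed value satisfies the target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target

    def gap(self, observed: float) -> float:
        """Shortfall against the target; zero or negative means the target is met."""
        if self.higher_is_better:
            return self.target - observed
        return observed - self.target


semis = Benchmark(
    interest="Advanced-node semiconductor production in U.S. or allied territory",
    target=70.0,
    unit="percent of production",
    higher_is_better=True,
    lead_agency="Commerce",
    supporting=["Treasury", "DOE", "DoD"],
)

dependency = Benchmark(
    interest="Dependency on any single adversarial nation (targeted categories)",
    target=20.0,
    unit="percent of supply",
    higher_is_better=False,
    lead_agency="UNASSIGNED",  # placeholder: the article does not name a lead here
)

# Hypothetical observed values.
semis_met = semis.is_met(64.0)          # below the 70 percent floor
semis_gap = semis.gap(64.0)             # six points short
dependency_met = dependency.is_met(18.0)  # under the 20 percent ceiling
```

Recording the direction explicitly matters because some benchmarks are floors (production share) and others are ceilings (dependency), and a shared scoreboard has to treat both consistently.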

National Strategic Index Structure: Composite Measures for Senior-Leader Decision Support

Note. This figure supports the paper’s index framework by illustrating how multiple indicators can be weighted, aggregated, and interpreted as a national strategic index tied to a defined interest benchmark. It reinforces the argument that indexes translate complex competitive conditions into interpretable signals that enable trend recognition, threshold-based review, and interagency synchronization.

7. National Strategic Index Construction

National strategic indexes serve as composite measures of priority national interests. Each index integrates multiple indicators drawn from relevant DIMEFIL domains and assessed through PMESII-PT lenses. Indicators are weighted by strategic relevance rather than data availability alone.

Indexes serve three functions.

First, they translate complex strategic conditions into interpretable signals for senior leaders.

Second, they enable trend analysis by highlighting directional movement rather than static snapshots.

Third, they provide an interagency synchronization mechanism by aligning diverse activities toward shared outcomes.

Thresholds associated with each index prompt review rather than automatic action. When thresholds are crossed, leaders are alerted to reassess assumptions, resource allocation, or posture.
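As a minimal sketch of the composite measure described above, an index can be computed as a weighted average of indicator scores that have already been placed on a common 0 to 100 scale. The function name `composite_index`, the indicator names, the scores, and the weights are all hypothetical; the article does not fix a specific aggregation formula, and a weighted mean is only one defensible choice.

```python
from typing import Dict


def composite_index(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of 0-100 indicator scores; weights need not sum to 1."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same indicators")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[name] * weights[name] for name in scores) / total


# Hypothetical indicator scores and weights for illustration.
scores = {
    "minerals_security": 80.0,
    "semiconductor_resilience": 60.0,
    "maritime_logistics": 40.0,
}
weights = {
    "minerals_security": 5,
    "semiconductor_resilience": 3,
    "maritime_logistics": 2,
}

index = composite_index(scores, weights)  # (400 + 180 + 80) / 10 = 66.0
```

Keeping the individual scores alongside the composite preserves the subscore fidelity that senior leaders need when they ask why the number moved.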

At minimum, the following indexes are required to operationalize predictive grand strategy.

Illustrative Dashboard Concept for Continuous Monitoring, Trend Analysis, and Early Warning

Note. This figure provides an example of how TITAN outputs can be displayed through dashboard visualization to support continuous monitoring of indicators, trendlines, and alert states. It supports the paper’s claim that predictive architecture must present a persistent national scoreboard that is interpretable, auditable, and useful for early warning and coordinated action.

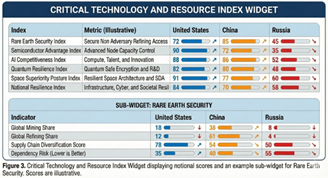

7.1 Strategic Power Index (SPI)

A composite measure across DIMEFIL domains that provides a single summary score reflecting relative advantage against peer competitors. Subscores preserve fidelity while the composite focuses leadership attention.

7.2 Economic Security and Supply Chain Coercion Index

Measures import dependencies, chokepoint vulnerabilities, energy diversification, strategic minerals security, semiconductor resilience, and maritime logistics risk. It detects early signs of economic coercion, supply chain manipulation, or strategic dependence.

7.3 Artificial Intelligence Competitiveness Index (AICI)

Tracks compute capacity, access to training data, model performance benchmarks, density and retention of AI researchers, government integration of AI into operations, private sector innovation, and governance safeguards.

7.4 Quantum Resilience Index (QRI)

Evaluates adversary breakthroughs, United States research progress, quantum-resilient cryptography adoption, encryption vulnerability, and quantum sensing and communications development.

7.5 Space Superiority Posture Index (SSPI)

Measures satellite resilience, anti-satellite threat patterns, PNT integrity, cislunar competition dynamics, launch capacity, commercial dependencies, and space domain awareness.

7.6 National Resilience Index (NRI)

Integrates infrastructure redundancy, grid restoration time, cyber defense posture, emergency response capacity, public trust, information environment integrity, and financial system stability under stress.

8. Methodological Notes for Index and Model Construction

A predictive grand strategy architecture rises or falls on methodological credibility. Senior policy leaders require interpretability, while academic and oversight communities require rigor, auditability, and restraint. This section provides a practical methodological approach that is sufficiently detailed for scholarly review while remaining usable for implementation.

8.1 Indicator Selection Criteria

Indicators should be selected based on strategic relevance rather than convenience. Each candidate indicator should meet four criteria.

Relevance: The indicator must map directly to a quantified national interest benchmark. If it does not change decision making, it does not belong in the index.

Observability: The indicator must be measurable consistently over time using reliable sources, including government reporting, vetted commercial data, allied reporting, or validated open sources.

Sensitivity: The indicator must change in meaningful ways when competitive conditions change. Indicators that remain static or lag too long reduce early warning value.

Actionability: The indicator must connect to policy levers or operational actions. Indicators that cannot be influenced by any instrument of national power should be treated as contextual variables rather than core index components.

These criteria prevent indexes from becoming academic catalogs and ensure that each component supports decision relevance.

8.2 Normalization and Scaling

Most indicators exist on different scales. Some are percentages, some are counts, some are financial quantities, and some are qualitative proxies. To combine them, the system requires normalization.

A practical approach is to scale each indicator to a 0 to 100 score using one of the following:

- Min-max normalization based on historical and competitive ranges

- Z-score standardization for anomaly detection and deviations from baseline

- Benchmark-anchored scaling where 100 equals the target national interest benchmark and values below reflect deterioration

Benchmark-anchored scaling is particularly useful for policy audiences because it ties the number to a defined condition of sufficiency.
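The three scaling options can be sketched as follows. The function names and parameters are illustrative assumptions. Note that a z-score is unbounded, so it is returned here as a raw deviation for anomaly detection rather than forced onto the 0 to 100 scale; how to map it onto that scale, if at all, is a design decision the article leaves open. Capping the benchmark-anchored score at 100 is likewise an assumption.

```python
from statistics import mean, pstdev
from typing import Sequence


def min_max_score(value: float, low: float, high: float) -> float:
    """Scale to 0-100 against a historical or competitive range [low, high]."""
    if high <= low:
        raise ValueError("high must exceed low")
    clipped = min(max(value, low), high)  # values outside the range are clamped
    return 100.0 * (clipped - low) / (high - low)


def z_score(value: float, history: Sequence[float]) -> float:
    """Deviation from the historical baseline, in standard deviations."""
    sigma = pstdev(history)
    if sigma == 0:
        return 0.0  # a flat history gives no basis for measuring deviation
    return (value - mean(history)) / sigma


def benchmark_score(value: float, benchmark: float,
                    higher_is_better: bool = True) -> float:
    """100 means the benchmark is met; lower scores reflect deterioration."""
    if benchmark <= 0 or value < 0:
        raise ValueError("benchmark must be positive and value non-negative")
    if higher_is_better:
        ratio = value / benchmark
    else:
        ratio = 1.0 if value == 0 else benchmark / value
    return 100.0 * min(1.0, ratio)  # assumption: exceeding the benchmark caps at 100


# Hypothetical reading: 35 percent secure refining access against a 40 percent target.
refining = benchmark_score(35.0, 40.0)  # 87.5
```

For a ceiling-type benchmark such as the 20 percent dependency limit, passing `higher_is_better=False` inverts the ratio so that exceeding the ceiling lowers the score.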

8.3 Weighting Strategy

Weighting is the most contested element of index design. It also becomes one of the most powerful mechanisms for forcing interagency alignment.

Weights should be derived through a structured method rather than subjective preference. A practical approach includes:

- Expert elicitation using multi-agency panels to rank indicators by strategic impact

- Historical back-testing to evaluate which indicators preceded known competitive inflection points

- Sensitivity testing to determine which indicators most influence the index under plausible scenarios

- Governance review to confirm that weights reflect the Grand Strategy Directive priorities rather than agency parochialism

Weights should be revisited on a defined cycle. Adjustments should be logged, justified, and auditable.
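As one illustration of the expert elicitation step, panel rankings can be combined into weights with a simple Borda count, and each adjustment can be written to an audit log. The article does not specify an aggregation method, so the Borda count is a substitute chosen for transparency; the function names, indicator names, and rankings below are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def weights_from_rankings(rankings: List[List[str]]) -> Dict[str, float]:
    """Borda count: each panel orders indicators from most to least important.

    With n indicators, first place earns n points and last place earns 1,
    so no ranked indicator ends up with zero weight. Weights sum to 1.
    """
    points: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        n = len(ranking)
        for position, indicator in enumerate(ranking):
            points[indicator] += n - position
    total = sum(points.values())
    return {name: p / total for name, p in points.items()}


def log_weight_change(log: List[dict], indicator: str, old: float,
                      new: float, rationale: str, cycle: str) -> None:
    """Append an auditable record of a weight adjustment."""
    log.append({"indicator": indicator, "old": old, "new": new,
                "rationale": rationale, "cycle": cycle})


# Hypothetical rankings from three agency panels.
panel_rankings = [
    ["production_continuity", "shipping_disruption", "allied_inventory"],
    ["production_continuity", "allied_inventory", "shipping_disruption"],
    ["shipping_disruption", "production_continuity", "allied_inventory"],
]
weights = weights_from_rankings(panel_rankings)

audit_log: List[dict] = []
log_weight_change(audit_log, "production_continuity", 0.40,
                  weights["production_continuity"],
                  "panel re-ranking", "hypothetical review cycle")
```

Elicited weights of this kind would still need to pass the back-testing, sensitivity testing, and governance review steps listed above before adoption.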

8.4 Baselines, Trendlines, and Leading Indicators

Indexes should not be interpreted as static scores. Their most important value is trend and acceleration.

Each index should produce:

- A current score

- A trendline over multiple windows such as 30, 90, 180, and 365 days

- A rate of change measure

- A volatility measure to detect destabilization

A separate designation should be maintained for leading indicators versus lagging indicators. Leading indicators enable anticipatory action. Lagging indicators validate outcomes and refine model training.
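
The outputs listed above could be derived from a daily score series along the following lines. This is a minimal sketch that assumes one score per day and uses the standard deviation of day-over-day changes as the volatility measure; both are assumptions of the example rather than requirements stated in this article.

```python
from statistics import pstdev


def trend_metrics(daily_scores, window):
    """Summarize the last `window` daily scores of an index."""
    if window < 2 or len(daily_scores) < window:
        raise ValueError("need a window of at least 2 and enough observations")
    recent = daily_scores[-window:]
    change = recent[-1] - recent[0]
    daily_changes = [later - earlier for earlier, later in zip(recent, recent[1:])]
    return {
        "current": recent[-1],
        "change": change,                      # net movement over the window
        "rate_per_day": change / (window - 1),  # average daily rate of change
        "volatility": pstdev(daily_changes),    # dispersion of daily moves
    }


# Synthetic series for illustration: a steady one-point daily decline.
series = [80.0, 79.0, 78.0, 77.0, 76.0]
print(trend_metrics(series, 5))
```

Running the same function with windows of 30, 90, 180, and 365 observations would produce the multi-window view described above.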

8.5 Thresholds and Alert Logic

Thresholds should be defined as review triggers rather than automatic action mandates. Threshold design should incorporate:

- Green status: within acceptable variance from benchmark

- Yellow status: sustained deterioration, or multiple leading indicators trending adverse

- Red status: benchmark failure, or convergence of multiple high-risk indicators

- Watch status: competitor breakthrough indicators that do not yet degrade posture but may soon

Thresholds should be mathematically defined and linked to benchmarks. Each alert should automatically generate an explainability packet showing which indicators moved, how weights contributed, and how the score changed.
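
A simplified sketch of mathematically defined thresholds and an explainability packet follows. The gap values (10 and 25 points below benchmark) are hypothetical, and the sketch covers only the score-based part of the logic: watch status and the "sustained" or "multiple indicator" conditions would need additional inputs that are not modeled here.

```python
def status_for(score, benchmark=100.0, yellow_gap=10.0, red_gap=25.0):
    """Map a benchmark-anchored score to a review status (gaps are hypothetical)."""
    gap = benchmark - score
    if gap >= red_gap:
        return "red"
    if gap >= yellow_gap:
        return "yellow"
    return "green"


def explainability_packet(previous, current, weights):
    """Show which indicators moved and how each weighted move changed the score."""
    total = sum(weights.values())
    contributions = {
        name: (current[name] - previous[name]) * weights[name] / total
        for name in weights
    }
    # Largest absolute contribution first, so reviewers see the main driver on top.
    ranked = dict(sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True))
    return {"score_change": sum(contributions.values()), "contributions": ranked}


# Hypothetical two-indicator index, for illustration only.
previous = {"a": 80.0, "b": 60.0}
current = {"a": 70.0, "b": 62.0}
weights = {"a": 0.6, "b": 0.4}
print(status_for(88.0))
print(explainability_packet(previous, current, weights))
```

Because the composite is a weighted average, the per-indicator contributions sum to the total score change, which is what makes the packet auditable.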

8.6 Validation and Back-Testing

Indexes and micro-models must be validated against reality. A validation cycle should include:

- Retrospective back-testing against known events such as sanctions campaigns, supply chain disruptions, military escalations, or technology breakthroughs

- Cross-model consistency checks to ensure that related indexes do not contradict each other without explanation

- Outcome tracking to determine whether policy actions improved relevant indicators, validating causal assumptions

- Red teaming by independent analytic groups to challenge indicator choice, weighting, and potential bias

This validation process strengthens credibility and reduces the risk of false precision.
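
Retrospective back-testing can be illustrated with a simple lead-time check: did the index fall below its alert threshold in the months before a known event, and if so, how early? The sketch below uses synthetic dates and scores, and the 180-day lookback is an assumed parameter rather than a recommended value.

```python
from datetime import date


def first_alert_before(series, event_day, threshold, lookback_days=180):
    """Lead time in days if the index fell below `threshold` before the event."""
    crossings = [
        day
        for day, score in sorted(series.items())
        if score < threshold and 0 < (event_day - day).days <= lookback_days
    ]
    return (event_day - crossings[0]).days if crossings else None


def backtest(series, events, threshold, lookback_days=180):
    """Lead time per event plus the share of events preceded by an alert."""
    lead_times = {
        name: first_alert_before(series, day, threshold, lookback_days)
        for name, day in events.items()
    }
    detected = [value for value in lead_times.values() if value is not None]
    hit_rate = len(detected) / len(events) if events else 0.0
    return {"lead_times": lead_times, "hit_rate": hit_rate}


# Synthetic data for illustration only; not drawn from any real event.
series = {date(2020, 1, 1): 90.0, date(2020, 2, 1): 70.0, date(2020, 3, 1): 65.0}
events = {"synthetic_event": date(2020, 3, 15)}
print(backtest(series, events, threshold=75.0))
```

A fuller validation would also count alerts that were not followed by any event, since a high hit rate with many false alarms would still undermine credibility.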

8.7 Micro-Model Design and Nesting

National strategic indexes and micro-models serve complementary but distinct functions within TITAN. Indexes provide senior leaders with a stable, interpretable scoreboard that summarizes national posture against defined interests through a small number of composite measures, optimized for trend recognition, threshold alerts, and cross-domain prioritization. Micro-models, by contrast, provide high-resolution analytic depth on specific competitive problems that cannot be credibly represented through a single aggregate score alone. They isolate a discrete domain (for example, rare earth refining exposure, semiconductor chokepoints, AI compute capacity, Taiwan deterrence stability, or power grid vulnerability), define tighter causal assumptions, incorporate specialized data sources, and generate more sensitive leading indicators.

In effect, indexes answer “Are we winning or losing, and how fast is it changing?” while micro-models answer “What exactly is driving the movement, where is the pressure accumulating, and which agency levers can change the outcome?” When micro-models are nested under shared benchmarks and feed upward into national indexes through standardized governance rules, the system gains both strategic coherence and analytic precision, avoiding the false choice between oversimplified dashboards and fragmented expert stovepipes.

Each micro-model should specify:

- Purpose and benchmark linkage

- Inputs and data sources

- Causal assumptions and known limitations

- Outputs and decision levers

- Linkage to parent indexes

Nesting rules ensure that micro-models support strategic coherence rather than producing disconnected analytic outputs by forcing every micro-model to roll upward into national interests, national indexes, and NSC-level decision triggers in a disciplined chain of logic. For example, consider a China-driven rare earth coercion scenario: a Commerce-led micro-model monitors China’s refining dominance, export control signaling, shipping anomalies, and price manipulation indicators, while an allied diversification sub-model tracks non-China processing capacity growth and contract reliability. As those indicators deteriorate, the micro-model automatically updates the Economic Security and Supply Chain Coercion Index, which in turn affects the Strategic Power Index trendline and triggers a “yellow” threshold review at the national level. That review is not abstract. It elevates a defined national interest benchmark (secure non-China refining access) and assigns action ownership across agencies: Commerce drives industrial policy and export control enforcement, Treasury evaluates coercive finance and sanctions options, State coordinates allied sourcing agreements and diplomatic signaling, DoD assesses operational risk to precision munitions and key platforms, and the Intelligence Community refines intent and timing indicators. The NSC then receives a single integrated picture showing what China is doing, what U.S. national interest is being degraded, how fast risk is accumulating, which agencies own the levers, and what decision options exist before the coercion becomes irreversible. In this way, nesting turns micro-model outputs into measurable national-level consequences and converts scattered warning into synchronized, whole-of-government action against China’s competitive momentum.
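
One way to picture the specification fields and the nesting rule is a small data structure in which a micro-model cannot be attached to an index unless it declares that index as its parent. The class names, fields, scores, and weights below are hypothetical and intended only as a sketch of the roll-up logic.

```python
from dataclasses import dataclass, field


@dataclass
class MicroModel:
    name: str
    purpose: str
    benchmark: str
    inputs: list
    assumptions: list
    decision_levers: list
    parent_index: str
    score: float = 0.0  # current 0-100 output of the micro-model


@dataclass
class NationalIndex:
    name: str
    children: dict = field(default_factory=dict)  # model name -> (model, weight)

    def attach(self, model, weight):
        """Nesting rule: a model must declare this index as its parent."""
        if model.parent_index != self.name:
            raise ValueError(f"{model.name} does not roll up to {self.name}")
        self.children[model.name] = (model, weight)

    def score(self):
        """Weighted roll-up of child micro-model scores."""
        total = sum(weight for _, weight in self.children.values())
        if total <= 0:
            raise ValueError("index has no weighted micro-models attached")
        return sum(m.score * w for m, w in self.children.values()) / total


# Hypothetical values for illustration only.
index = NationalIndex("Economic Security and Supply Chain Coercion Index")
refining = MicroModel(
    name="rare_earth_coercion",
    purpose="Monitor refining dominance and coercion signals",
    benchmark="Secure non-China refining access",
    inputs=["export control signaling", "shipping anomalies"],
    assumptions=["price anomalies precede formal controls"],
    decision_levers=["industrial policy", "allied sourcing agreements"],
    parent_index=index.name,
    score=60.0,
)
allied = MicroModel(
    name="allied_diversification",
    purpose="Track non-China processing capacity growth",
    benchmark="Secure non-China refining access",
    inputs=["capacity growth", "contract reliability"],
    assumptions=["announced capacity is delivered on schedule"],
    decision_levers=["allied sourcing agreements"],
    parent_index=index.name,
    score=80.0,
)
index.attach(refining, 0.75)
index.attach(allied, 0.25)
print(index.score())  # 65.0
```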

8.8 Explainability, Auditability, and Governance

Any system informing national decisions must be explainable, including its machine learning components. It must also be flexible enough to adapt as conditions change.

At minimum:

- Every index update must be reproducible

- Weight changes must be logged

- Data provenance must be traceable

- Model performance must be monitored for drift

- Oversight bodies must be able to audit outputs without requiring access to sensitive sources and methods

Explainability is not optional. It is the difference between an analytic tool and an untrusted black box.
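
Two of the minimum requirements, reproducible updates and logged weight changes, can be sketched with standard-library tools. The fingerprint below hashes the inputs, weights, and a method version so that an auditor can confirm two runs used identical ingredients without seeing the underlying sources. Field names and the version string are assumptions made for the example.

```python
import hashlib
import json


def update_fingerprint(inputs, weights, method_version):
    """Deterministic SHA-256 over everything needed to reproduce an index update."""
    payload = json.dumps(
        {"inputs": inputs, "weights": weights, "method_version": method_version},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def log_weight_change(log, indicator, old, new, justification, approved_by):
    """Append an auditable record; a change without justification is rejected."""
    if not justification.strip():
        raise ValueError("a weight change requires a written justification")
    entry = {
        "indicator": indicator,
        "old_weight": old,
        "new_weight": new,
        "justification": justification,
        "approved_by": approved_by,
    }
    log.append(entry)
    return entry


# Hypothetical values for illustration only.
fp_a = update_fingerprint({"x": 1.0, "y": 2.0}, {"x": 0.5, "y": 0.5}, "v1")
fp_b = update_fingerprint({"y": 2.0, "x": 1.0}, {"y": 0.5, "x": 0.5}, "v1")
print(fp_a == fp_b)  # True: key order does not affect the fingerprint
```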

9. Domain-Specific Micro-Models

Complementing national strategic indexes are micro-models that provide higher resolution analysis of discrete strategic problems. These models focus on specific sectors, regions, or capabilities and enable tailored variables, specialized data sources, and more sensitive leading indicators than an aggregate index can provide alone. Micro-models are the mechanism that makes predictive grand strategy operational by converting broad national interests into measurable, decision-relevant analytic components that can be owned by specific agencies and continuously updated.

A China-focused example illustrates their value. China rarely seeks advantage through a single dramatic action. It accumulates leverage through coordinated pressure across supply chains, technology, information, and regional posture. A rare earth coercion micro-model, for instance, can monitor China’s refining dominance, export control signaling, shipping disruptions, price manipulation patterns, and downstream impacts on U.S. defense manufacturing and advanced technology production. That micro-model can then feed upward into a national Economic Security and Supply Chain Coercion Index, providing early warning when China is moving from routine market influence to deliberate strategic pressure. Similarly, a Taiwan deterrence stability micro-model can track PLA activity patterns, maritime density, missile readiness signals, cyber probing, and narrative escalation indicators, allowing leaders to detect coercion trajectories before they appear as a conventional invasion warning.

Micro-models are modular by design. They can be built quickly, improved as conditions change, and retired when no longer relevant. Their outputs feed upward into national strategic indexes through standardized nesting rules, preserving strategic coherence while increasing analytic precision. In effect, micro-models provide the detailed “engine-room” analytics, while national indexes provide the senior-leader scoreboard that translates complexity into decision advantage against strategic competitors such as China.

The following subsections illustrate representative micro-models that demonstrate how TITAN converts priority national interests into measurable, continuously updated analytic components aligned to China’s most consequential pathways of competition and coercion.

9.1 Rare Earth Security Model (RESM)

Benchmark: Maintain at least 40 percent secure non-PRC refining access

Indicators: mining and refining distribution, export control pressure, disruptions, choke points, coercion signals

Outputs: security score, forecasts, threshold alerts, response options for DOE, Commerce, State, and DoD

9.2 Semiconductor Dependency Model (SDM)

Benchmark: 70 percent advanced node capacity in United States or allied territory

Indicators: Taiwan output, PRC subsidies, US fab build progress, export control enforcement, equipment chokepoints

Outputs: dependency risk score, Taiwan crisis scenarios, policy levers for NSC, Commerce, and Congress

9.3 Taiwan Strait Deterrence Model

Indicators: PRC deployment patterns, allied posture, missile readiness, information operations tempo, economic coercion signals

Outputs: deterrence stability score, escalation probability curves, integrated response options

9.4 Space Domain Vulnerability Model (SVM)

Indicators: redundancy mapping, anti-satellite testing patterns, debris risk, jamming activity, launch disruption patterns

Outputs: vulnerability score, crisis projections, prioritized mitigation actions

9.5 Artificial Intelligence Advantage Model (AIAM)

Indicators: compute availability, performance benchmarks, patent and publication density, government adoption, education pipeline, adversary releases

Outputs: AI national power score, gap analysis, projections under multiple investment scenarios

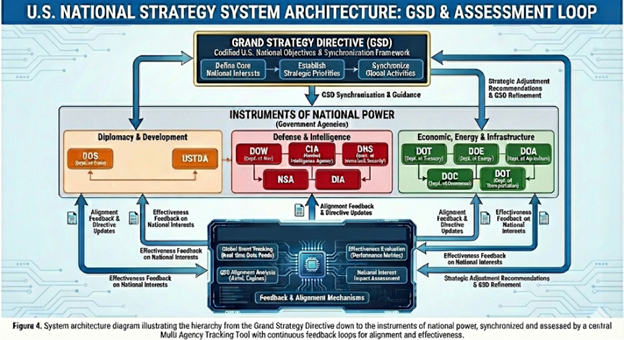

10. Interagency Roles and Synchronization

Operationalizing predictive grand strategy requires clear assignment of indicator responsibilities. The system does not remove missions. It clarifies who owns which indicators and which levers can be activated when thresholds are crossed.

Illustrative alignments include:

- State for alliance cohesion, diplomatic signaling, treaty compliance, political stability

- Defense for posture, readiness, deterrence metrics, experiments, space and cyber indicators

- DIA and the IC for intentions, mobilization indicators, escalation warning triggers

- Treasury for sanctions efficacy, currency patterns, coercion mapping, illicit finance networks

- Commerce for export controls, supply chain mapping, industrial capacity indicators

- DOE for critical minerals, grid resilience, semiconductor and quantum capacity assessments

- DHS and CISA for cyber patterns, infrastructure vulnerabilities, logistics anomalies

- FBI for counterintelligence and insider risk indicators

- NASA and Space Force for orbital awareness, vulnerability assessments, space traffic dynamics

For example, if China escalates a coordinated gray-zone campaign that combines cyber probing of U.S. port logistics systems, disinformation designed to trigger public distrust, and targeted economic pressure against key technology firms, TITAN ensures these signals are fused into one national picture rather than being treated as separate problems. DHS and CISA see the infrastructure risk, Treasury sees financial volatility and coercive patterns, Commerce sees supply chain disruption indicators, the Intelligence Community identifies intent and sequencing, and DoD assesses downstream readiness impacts. Instead of fragmented awareness, the NSC receives a single integrated alert showing what China is doing, which national interests are being degraded, which agencies own the levers, and what coordinated response options exist before disruption becomes strategic paralysis.
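
The fusion step in this example can be sketched as a lookup from indicators to owning agencies, so that every flagged signal arrives at the national level already tied to an owner. The indicator names and status values are hypothetical; the agency assignments follow the gray-zone example above.

```python
# Hypothetical indicator names; owners follow the gray-zone example above.
OWNERS = {
    "port_logistics_cyber_probing": "DHS and CISA",
    "financial_volatility": "Treasury",
    "supply_chain_disruption": "Commerce",
    "intent_and_sequencing": "Intelligence Community",
    "readiness_impact": "DoD",
}


def integrated_alert(statuses):
    """Group flagged indicators by owning agency into one picture."""
    flagged = {name: s for name, s in statuses.items() if s in ("yellow", "red")}
    unowned = sorted(set(flagged) - set(OWNERS))
    if unowned:
        # An alert with no owner is a governance gap, so fail loudly.
        raise KeyError(f"no owning agency registered for: {unowned}")
    by_agency = {}
    for name, status in sorted(flagged.items()):
        by_agency.setdefault(OWNERS[name], []).append((name, status))
    return by_agency


statuses = {
    "port_logistics_cyber_probing": "red",
    "financial_volatility": "yellow",
    "supply_chain_disruption": "green",
}
print(integrated_alert(statuses))
```

Rejecting unowned indicators at alert time is a deliberate choice in this sketch: it surfaces assignment gaps before a crisis rather than during one.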

11. System Architecture and Technical Requirements

Technical Implementation Considerations for TITAN

For TITAN to function as a continuous national sensing and decision-support system, the technical architecture must operate across multiple security environments and integrate both classified and unclassified data streams without compromising sources, methods, or mission assurance. In practice, this requires a deliberate design that supports multi-level classification, controlled information sharing, and rapid dissemination of decision-relevant outputs to senior leaders. TITAN should be engineered as a federated architecture rather than a single monolithic database, enabling agencies to retain stewardship of sensitive data while contributing standardized indicators and model outputs into a shared national assessment layer.

A core requirement is cross-domain interoperability. Strategic competition with China produces signals that span intelligence reporting, cyber telemetry, economic data, diplomatic reporting, and commercial indicators. TITAN must therefore support controlled movement of derived outputs, not raw sensitive data, across classification boundaries. The goal is to ensure that the NSC and interagency leadership can access a unified strategic picture at decision speed, while the Intelligence Community and Department of War maintain appropriate compartmentation and tradecraft protections. In this design, the most sensitive inputs remain protected, while explainable index movements, thresholds, and trendlines can be shared broadly enough to synchronize action.

TITAN also requires a scalable data fusion pipeline capable of ingesting structured and unstructured sources at tempo. This includes government reporting, commercial datasets, and open-source intelligence, all normalized through disciplined extract-transform-load (ETL) processes and tagged to the integrated analytic framework. Automated entity resolution, event detection, and time-series tracking are necessary to connect disparate signals into coherent indicators. National strategic indexes must be updated on defined rhythms, while micro-models can refresh at higher frequency depending on domain volatility. This architecture should integrate with operational command-and-control environments and watch functions, enabling coordination between National Operations Centers, Joint Operations Centers, and interagency decision forums without creating parallel stovepipes.

Artificial intelligence and machine learning should be treated as analytic accelerators, not as autonomous decision engines. Machine learning is most valuable in identifying weak signals, detecting anomalies, forecasting trajectories, and surfacing correlations across large-scale data streams that exceed human bandwidth. However, TITAN must remain auditable and explainable. Every index shift and alert must be traceable to underlying indicators, weighting logic, and data provenance. Model drift detection, bias monitoring, and periodic validation must be built into governance cycles to preserve credibility and prevent false precision. Human judgment remains the decision authority, with TITAN providing structured foresight rather than automated prescriptions.

Finally, cybersecurity and resilience are not optional features. TITAN itself becomes a high-value target in strategic competition with China and must be protected accordingly. The architecture must include strong identity management, role-based access control, encryption, segmentation, continuous monitoring, and zero-trust principles. Data integrity controls are essential to prevent manipulation of indicators, poisoning of training data, or adversary-driven distortion of analytic outputs. In this sense, TITAN is not only a measurement tool. It is a strategic capability that must be defended with the same seriousness as other national mission systems.
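
As one narrow illustration of a data integrity control, indicator records can carry a keyed hash so that tampering in transit or at rest is detectable. This sketch uses HMAC-SHA256 from the Python standard library; the record fields are hypothetical, and key management, which is the hard part in practice, is out of scope here.

```python
import hashlib
import hmac
import json


def sign_record(record, key):
    """HMAC-SHA256 over a canonical JSON encoding of the record."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def verify_record(record, signature, key):
    """Constant-time comparison to check the record has not been altered."""
    return hmac.compare_digest(sign_record(record, key), signature)


# Hypothetical record and demo key, for illustration only.
key = b"demo-key-not-for-production"
record = {"indicator": "refining_access", "value": 31.5, "as_of": "2025-01-01"}
signature = sign_record(record, key)

tampered = dict(record, value=45.0)
print(verify_record(record, signature, key))    # True
print(verify_record(tampered, signature, key))  # False
```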

U.S. National Strategy System Architecture: TITAN Highlighted as the Continuous Assessment and Synchronization Layer

Note. This figure shows the national strategy system architecture linking the Grand Strategy Directive (GSD) to the instruments of national power and the assessment loop. In Paper 3, the highlighted area emphasizes TITAN as the continuous measurement and decision-support system that ingests multi-domain data, generates strategic indexes and micro-model outputs, displays national posture through dashboards and alerts, and drives feedback and alignment mechanisms for whole-of-government synchronization.

TITAN requires a continuous data pipeline integrating structured, semi-structured, and unstructured data from government, allied, commercial, and open-source domains.

Core functions include:

- Real-time ingestion of economic, cyber, diplomatic, military, space, and financial streams

- PMESII-PT tagging for environmental variables

- ASCOPE tagging for actors, organizations, infrastructures, and events

- DIMEFIL linkage for response instrument alignment

- Time series trend detection and model retraining

- Threshold alert logic that is explicit and auditable

- Simulation capabilities including scenario testing and agent-based modeling

- Multi-level classification support with appropriate cross-domain protections

- Integration with commercial and academic partners under governed agreements

The technical goal is not automation for its own sake. The goal is decision superiority through continuous strategic measurement and coordinated response options.
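
The tagging functions listed above could be enforced at ingestion with a record type that rejects any observation carrying a tag outside the three frameworks. The category names come from the framework definitions used in this series; the record fields and the sample observation are hypothetical.

```python
from dataclasses import dataclass

PMESII_PT = {
    "Political", "Military", "Economic", "Social",
    "Information", "Infrastructure", "Physical Environment", "Time",
}
ASCOPE = {"Areas", "Structures", "Capabilities", "Organizations", "People", "Events"}
DIMEFIL = {
    "Diplomatic", "Informational", "Military", "Economic",
    "Financial", "Intelligence", "Law Enforcement",
}


@dataclass(frozen=True)
class Observation:
    source: str
    indicator: str
    value: float
    pmesii_pt: str  # environmental variable tag
    ascope: str     # actor, organization, infrastructure, or event tag
    dimefil: str    # response instrument linkage

    def __post_init__(self):
        checks = (
            ("pmesii_pt", self.pmesii_pt, PMESII_PT),
            ("ascope", self.ascope, ASCOPE),
            ("dimefil", self.dimefil, DIMEFIL),
        )
        for label, tag, allowed in checks:
            if tag not in allowed:
                raise ValueError(f"{label} tag {tag!r} is not a recognized category")


# Hypothetical observation for illustration only.
obs = Observation(
    source="commercial shipping feed",
    indicator="port_dwell_time_days",
    value=6.2,
    pmesii_pt="Infrastructure",
    ascope="Structures",
    dimefil="Economic",
)
print(obs.dimefil)
```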

12. Operational Use Cases for Senior Decision Makers

Predictive architecture must change decisions to matter.

Crisis Early Warning

The Taiwan model detects converging indicators across military posture, coercion signals, and information operations. Escalation curves shift. The system alerts NSC, DoD, State, Treasury, and the IC with pre-tested option sets.

Supply Chain Oversight

Rare earth and semiconductor indexes detect investment slippage and adversary acceleration. A yellow threshold triggers coordinated interagency action and provides Congress legislative options tied to measurable posture deterioration.

Hybrid Cyber Response

The National Resilience Index detects simultaneous cyber probing, narrative manipulation, and market instability signals. DHS, FBI, Cyber Command, and Treasury see the same integrated picture early enough to act before crisis consolidation.

Space Integrity

The Space Superiority Index detects a pattern of counterspace testing and interference. Vulnerability moves from green toward yellow. Mitigation and deterrence signaling options are tested through simulation and presented coherently.

13. Implementation Considerations

Implementation of TITAN requires more than software. It requires an operational model built on cross-domain tradecraft, disciplined interagency communication, and a standing assessment rhythm that can translate national interests into measurable indicators and coordinated action. The technical architecture will only succeed if it is matched by an integrated team construct that synchronizes intelligence analysts, planners, policy experts, data scientists, data engineers, and cyber defenders into a single workflow that produces timely, decision-relevant outputs for senior leaders. This is not theoretical. It reflects how real strategic decision systems must function under continuous pressure. As the Deputy for the CENTCOM J3/Operations Interagency Group, I led national-level assessments and predictive analysis for the Middle East and coordinated operational alignment across U.S. government agencies and the Department of Defense (now Department of War). I also directed the Joint Operations Center at the strategic level, maintaining real-time situational awareness and decision-support information for the CENTCOM Commander. TITAN applies that same operational discipline at the national level by fusing indicators, enforcing shared standards, and enabling synchronized whole-of-government response across all priority national interests, not through episodic briefings, but through a persistent, measurable, and actionable strategic picture.

14. Why This System Is Unique

Commercial and government analytics platforms can ingest data, enable search, visualize relationships, and support operational workflows. Those capabilities are valuable and often necessary. However, TITAN is designed to solve a different problem: not data management, but national-level strategic measurement and synchronization.

TITAN is unique in five ways. First, it translates enduring U.S. national interests into explicit, quantifiable benchmarks that define what “success” and “deterioration” mean in measurable terms. Second, it computes national posture across the instruments of national power through continuously updated strategic indexes rather than periodic narrative assessments. Third, it incorporates domain-specific micro-models that generate higher-resolution early warning and probabilistic trajectories, enabling leaders to see risk accumulation before it becomes irreversible. Fourth, it synchronizes interagency assessment cycles around shared indicators and threshold triggers, forcing unity of effort and reducing response latency. Fifth, it links measurement directly to a Grand Strategy Directive, ensuring that analysis is anchored to national priorities rather than to disconnected departmental objectives.

In short, TITAN is not simply an analytic dashboard or a data integration environment. It is a national strategic operating system designed to measure competitive advantage, reveal strategic drift, and orchestrate whole-of-government action toward objective outcomes.

15. Limitations and Risks

America’s competitors are not waiting for declared war to shape strategic outcomes. They are running continuous campaigns designed to accumulate advantage while the United States remains trapped in episodic assessment cycles. China is executing an integrated national strategy that fuses industrial policy, technology acquisition, information control, cyber pressure, economic coercion, and military modernization into a single competitive system. It is positioning itself to dominate critical supply chains, constrain U.S. options through dependencies, and shape global narratives in ways that weaken alliance cohesion and democratic confidence. Russia applies a complementary model optimized for disruption: coercive energy leverage, gray zone operations, cyber sabotage, political warfare, and persistent intimidation intended to fracture Western unity and exhaust political will. Iran advances influence through proxy networks, coercive escalation, and regional destabilization calibrated to stay below thresholds that trigger decisive response. North Korea sustains strategic relevance through missile development, cyber theft, and crisis manufacturing that forces attention and resources out of proportion to its economic weight. The cumulative effect is that adversaries gain ground through tempo, integration, and persistence, not just through battlefield victory. If the United States does not build a continuous national capability to measure risk accumulation, detect strategic drift early, and synchronize whole-of-government action, it will continue to experience the same pattern: surprise at inflection points, fragmented response, reactive resource allocation, and the slow realization that strategic losses occurred gradually, then suddenly, and often without a single decisive moment to reverse them.

Several constraints must be acknowledged.

Data quality varies across domains. Indicator selection reflects analytic judgment. Quantification risks false precision if leaders misinterpret scores as deterministic forecasts.

Interagency barriers including classification, culture, and resourcing can impede integration. Algorithmic bias and model drift require continuous auditing. For these reasons, the architecture is designed to augment strategic judgment rather than replace it.

16. Conclusion

This article provides a blueprint for operationalizing predictive grand strategy through national indexes and nested micro-models integrated across DIMEFIL, PMESII-PT, and ASCOPE. The system shifts the United States from episodic assessment to continuous strategic measurement and anticipatory governance.

In sustained great power competition, strategic advantage increasingly belongs to the actor that detects accumulation of risk early and coordinates response faster than competitors can consolidate advantage.

If the United States requires a Grand Strategy Directive, and if it must synchronize the instruments of national power, then it must build a system like TITAN or accept structural reactivity as a permanent condition.

The nation that masters predictive synchronization of power will shape the international system. The nation that does not will be shaped by others. The United States must be the former.

Appendix A. Illustrative Predictive Dashboard Concepts (Draft TITAN)

Figure 1. Global Strategic Competition Map (Concept)

Global map highlighting United States alliances, Chinese Belt and Road corridors, Russian influence zones, chokepoints, semiconductor hubs, strategic mineral regions, and undersea cable clusters. Includes Indo-Pacific and Arctic inset maps.

Figure 2. Strategic Power Dashboard (Illustrative Mockup)

A “wall of dashboards” concept including:

- Strategic Power Index by actor

- DIMEFIL comparative widget

- Critical technology and resource posture widget

- PMESII-PT posture snapshot

- Active alert panel

Table 1. DIMEFIL Comparative Snapshot (Illustrative)

| Instrument | Subcomponent | United States | China | Russia |
| --- | --- | --- | --- | --- |
| Diplomatic | Alliance Network Strength | 92 | 68 | 55 |
| Diplomatic | Multilateral Influence | 88 | 72 | 50 |
| Information | Global Narrative Reach | 85 | 80 | 60 |
| Information | Disinformation and Influence Tools | 78 | 86 | 74 |
| Military | Power Projection Capacity | 94 | 82 | 68 |
| Military | Readiness and Sustainment | 88 | 76 | 60 |
| Economic | GDP Scale and Diversity | 93 | 89 | 63 |
| Economic | Innovation and Productivity | 90 | 85 | 55 |
| Financial | Currency and System Centrality | 96 | 70 | 45 |
| Financial | Sanctions Leverage | 94 | 60 | 40 |
| Intelligence | Global Collection Reach | 92 | 78 | 65 |
| Intelligence | Analytic and Fusion Capacity | 90 | 75 | 62 |
| Law Enforcement | Global Law Enforcement Reach | 88 | 65 | 50 |
| Law Enforcement | Counterintelligence and Internal Security | 86 | 82 | 70 |

Table 2. Critical Technology and Resource Posture (Illustrative)

| Index | Metric | United States | China | Russia |
| --- | --- | --- | --- | --- |
| Rare Earth Security Index | Secure Non-Adversary Refining Access | 72 | 85 | 45 |
| Semiconductor Advantage Index | Advanced Node Capacity Control | 90 | 72 | 35 |
| AI Competitiveness Index | Compute, Talent, and Innovation | 88 | 86 | 52 |
| Quantum Resilience Index | Quantum-Safe Encryption and R&D | 82 | 80 | 48 |
| Space Superiority Posture Index | Resilient Space Architecture and SDA | 91 | 77 | 60 |
| National Resilience Index | Infrastructure, Cyber, and Societal Resilience | 84 | 70 | 58 |

Table 3. PMESII-PT Overview (Illustrative)

| Dimension | Description | United States | China | Russia |
| --- | --- | --- | --- | --- |
| Political | Institutional Stability and Legitimacy | 82 | 75 | 58 |
| Military | Global Reach and Readiness | 94 | 82 | 68 |
| Economic | Scale, Diversity, and Resilience | 93 | 89 | 63 |
| Social | Cohesion and Internal Stability | 76 | 72 | 55 |
| Information | Information Environment Control and Integrity | 80 | 84 | 62 |
| Infrastructure | Critical Infrastructure Condition | 86 | 80 | 60 |
| Physical Environment | Home Terrain Advantages and Vulnerabilities | 78 | 83 | 76 |
| Time | Strategic Time Advantage | 81 | 84 | 60 |

Table 4. Strategic Power Ratios (United States Baseline = 1.0)

| Domain | Ratio US to China | Ratio US to Russia |
| --- | --- | --- |
| Overall Strategic Power Index | 1.0 : 0.90 | 1.0 : 0.69 |
| Military Power Projection | 1.0 : 0.87 | 1.0 : 0.72 |
| Financial System Power | 1.0 : 0.73 | 1.0 : 0.47 |
| AI Competitiveness | 1.0 : 0.98 | 1.0 : 0.59 |
| Quantum Resilience | 1.0 : 0.98 | 1.0 : 0.59 |
| Space Superiority | 1.0 : 0.85 | 1.0 : 0.66 |
| National Resilience | 1.0 : 0.83 | 1.0 : 0.69 |
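
The domain ratios in Table 4 appear consistent with dividing each competitor's illustrative score by the U.S. score for the same measure and rounding to two decimals. That method is an inference from the numbers rather than something stated in the tables, and the sketch below checks it against a few rows from Tables 1 and 2.

```python
def power_ratio(us_score, other_score):
    """Competitor score relative to a U.S. baseline of 1.0, rounded to 2 decimals."""
    if us_score <= 0:
        raise ValueError("U.S. baseline score must be positive")
    return round(other_score / us_score, 2)


# Illustrative scores copied from Tables 1 and 2: (United States, China, Russia).
rows = {
    "Military Power Projection": (94, 82, 68),
    "Financial System Power": (96, 70, 45),   # Currency and System Centrality row
    "AI Competitiveness": (88, 86, 52),
}

for domain, (us, china, russia) in rows.items():
    print(f"{domain}: 1.0 : {power_ratio(us, china):.2f} | 1.0 : {power_ratio(us, russia):.2f}")
```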

PROFESSIONAL AUTHOR SUMMARY

About the Author

Col Tony Thacker, USA (Ret.), is a national-security strategist and senior advisor to U.S. government organizations. He currently serves as Vice President at i3Solutions, a cutting-edge technology and analytics firm, where he leads work in strategic planning, predictive modeling, and interagency integration.

A retired special-operations officer with multiple combat deployments, Col Thacker has held leadership and policy roles across joint, interagency, and multinational environments. His work focuses on integrating frameworks such as DIMEFIL, PMESII-PT, and ASCOPE with predictive analytics, building decision-advantage tools for strategic competition. He has published widely on foresight, national resilience, and grand-strategy modernization, including articles featured at i3CA.com.

Col Thacker is a doctoral candidate at the European Institute for Management & Technology (EIMT), where his research advances the development of a unified grand-strategy system for the United States, linking classical strategic theory with modern AI-enabled foresight.

Disclaimer

The views expressed are those of the author and do not represent the official position of any government agency.

Appendix B. Acronyms and Key Terms

AIAM (Artificial Intelligence Advantage Model). A TITAN micro-model that estimates AI national power by measuring compute, benchmarks, innovation density, and adoption indicators.

AICI (Artificial Intelligence Competitiveness Index). A proposed index that summarizes AI advantage using measures such as compute, talent, and innovation.

ASCOPE (Areas, Structures, Capabilities, Organizations, People, Events). A tagging and analysis framework used in TITAN to represent actors, organizations, infrastructure, and events within the operating context.

Benchmarks (National Interest Benchmarks). Quantified targets that define adequate posture for a national interest and enable scoring, trend analysis, and accountability.

Back-Testing. A validation method that compares index behavior against known historical events to test credibility and reduce false precision.

Cross-Domain Interoperability. The capability to integrate derived outputs across security boundaries so senior leaders can access a unified strategic picture at decision speed.

Data Fusion Pipeline. The ingestion and integration process that combines structured and unstructured data into usable indicators, indexes, and alerts.

DIMEFIL (Diplomatic, Informational, Military, Economic, Financial, Intelligence, Law Enforcement). The instrument framework used in TITAN to align response options and clarify which agencies own which levers.

Explainability Packet. A required output that shows which indicators moved, how weighting contributed, and why a score changed.

Federated Architecture. A system design where agencies retain stewardship of sensitive data while contributing standardized indicators and outputs into a shared assessment layer.

Leading Indicators. Forward-looking measures used for early warning and anticipatory action, distinct from lagging indicators that validate outcomes.

Micro-Models (Domain-Specific Micro-Models). High-resolution analytic modules focused on discrete problems like rare earth exposure, semiconductor chokepoints, AI capacity, or Taiwan deterrence stability.

Model Drift. Degradation in model performance over time due to changing conditions, requiring continuous auditing and retraining.

Multi-Level Classification. A capability that supports outputs across classification levels with appropriate controls and cross-domain protections.

NRI (National Resilience Index). A proposed index that detects risk convergence across infrastructure, cyber probing, information manipulation, and market instability signals.

PMESII-PT (Political, Military, Economic, Social, Information, Infrastructure, Physical Environment, Time). The macro-environment framework used for tagging and trend detection in TITAN.

QRI (Quantum Resilience Index). A proposed index measuring quantum-safe posture and the resilience of encryption and communications to quantum disruption.

RESM / Rare Earth Security Index. A micro-model or index that measures access to non-adversary refining and vulnerability to coercion through mineral dependencies.

Scenario Testing. A simulation function that explores alternative futures and response options, including agent-based modeling.

Semiconductor Advantage Index. A proposed index measuring advanced-node capacity control and related strategic technology posture.

SPI (Strategic Power Index). A summary scoreboard index that compares national power posture across actors and across DIMEFIL components.

SSPI / Space Superiority Index. A proposed index that detects counterspace threat patterns and measures resilient space posture and vulnerability.

Thresholds (Green/Yellow/Red/Watch). Mathematically defined review triggers linked to benchmarks that generate alerts and drive structured interagency review.

Time Series Trend Detection. Analytical methods that track movement over time to detect acceleration, volatility, and inflection points in strategic posture.

TITAN (Total Integrated Threat Assessment Network). A national decision-support system that measures national interests in real time using benchmarks, indexes, and micro-models to enable synchronized action.

Weighting Strategy. A structured method for assigning indicator weights using expert input, back-testing, sensitivity testing, and governance review to prevent parochial bias.